ResearchLab

Explore our research papers, strategies, books, and market insights from TA Quant's research team.

In The News

TAQUANT Surpasses $500M in Monthly Trading Volume

TAQUANT's algorithmic trading platform has crossed the $500 million monthly trading volume milestone, driven by growing adoption of its automated strategy marketplace and institutional API.

Research

Research Papers

TAQUANT WHITEPAPER

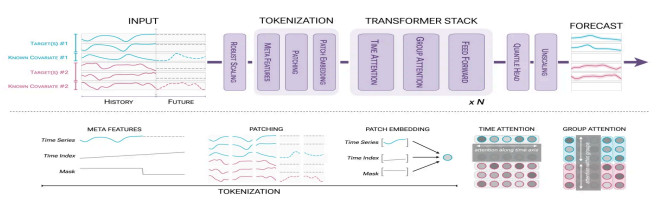

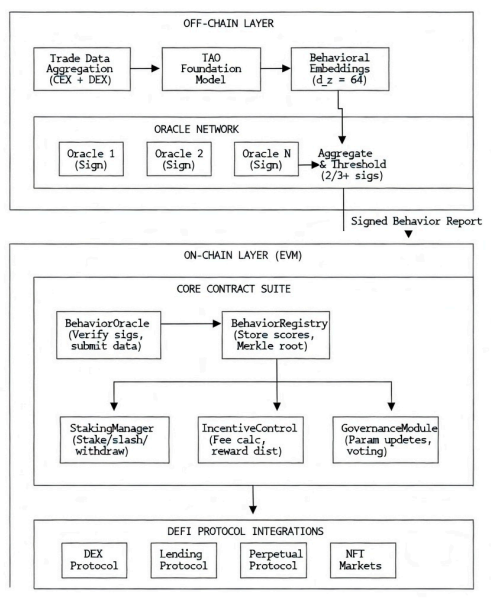

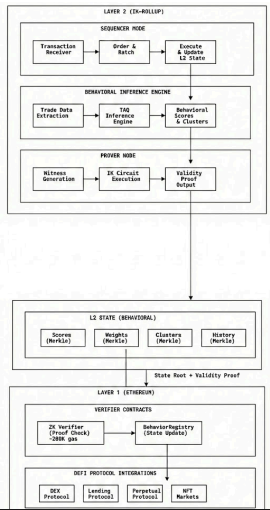

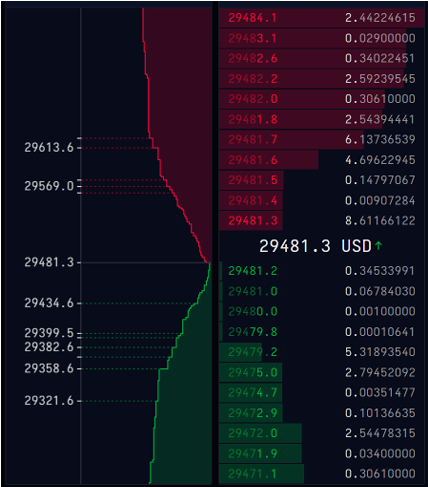

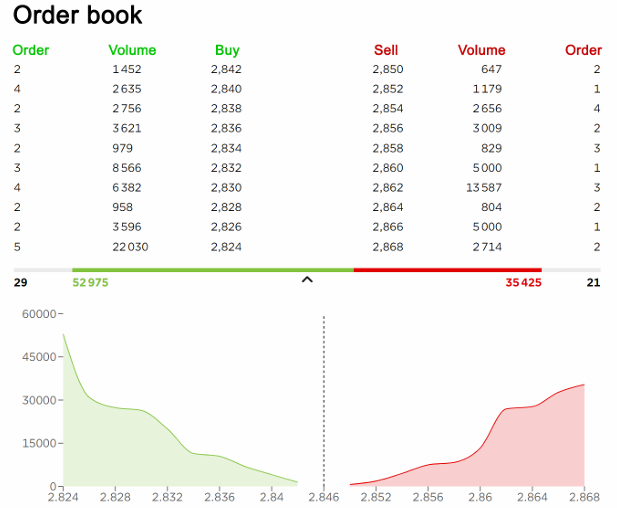

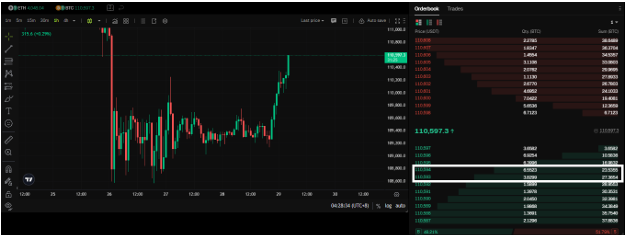

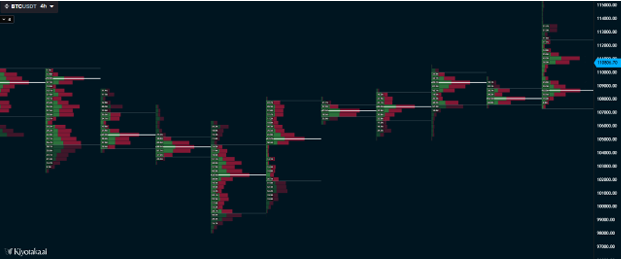

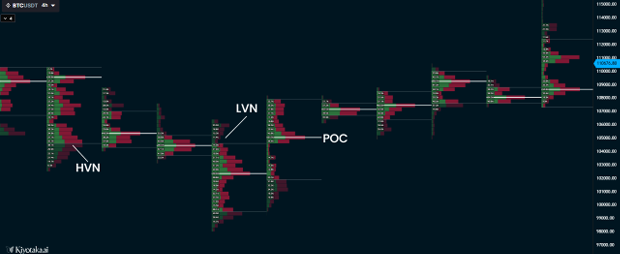

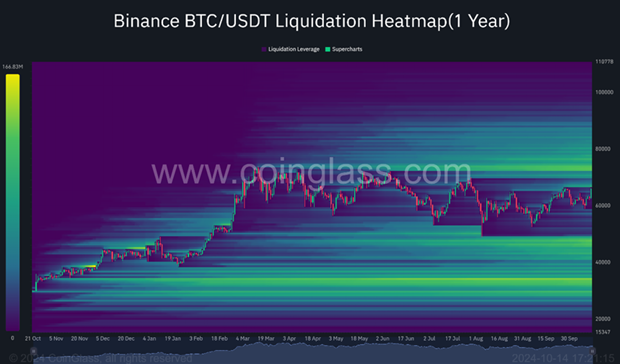

# TA QUANT

**TECHNICAL WHITEPAPER**

**Institutional-Grade Crypto Trading Infrastructure**

**Execution - Intelligence - Attribution - Automation**

**March 2026**

- **Execution Latency**: <10ms
- **Uptime (Beta)**: 99.94%
- **Rust Orders/sec**: 900+ OPS
- **AI Taxonomy Depth**: 5 Agent Classes

[taquant.com](https://taquant.com)

## Abstract

This whitepaper describes the complete technical architecture of the TA Quant platform. It is intended for technical readers - engineers, quantitative researchers, and technology leaders at trading firms, exchanges, and institutional counterparties - who require a detailed understanding of how the platform is designed, what guarantees it provides, and how its components interact.

TA Quant is a four-layer, closed-loop trading infrastructure platform. The Execution Layer (Terminal) provides deterministic, multi-exchange order lifecycle management built in native Rust. The Intelligence Layer (TA Quant AI) is a coordinated multi-agent system that replicates the functional architecture of an institutional quantitative trading desk. The Distribution Layer (TA Syndicate) is a performance-attributed marketing and KOL management infrastructure with verifiable full-funnel attribution from campaign exposure to executed on-exchange volume. A dedicated AI Strategy Agent (AISA) provides fully autonomous, policy-constrained trade execution for users who require a hands-off trading experience.

The platform is engineered around five non-negotiable architectural principles: separation of concerns across all layers, deterministic behaviour at the execution level, ensemble strategy management at the intelligence level, fraud-resistant attribution at the distribution level, and continuous closed-loop learning between all components. These principles are enforced architecturally - not by convention.
Performance data from the 90-day beta: 99.94% system uptime, sub-10ms internal routing latency, 4.2 basis points average slippage on liquid perpetual pairs, and measurable AI learning convergence across execution heuristics. All figures are from live system operation.

## Part I: System Philosophy & Architectural Principles

### 1.1 The Integration Thesis

The fundamental technical insight underlying TA Quant's architecture is that execution quality, trading intelligence, and growth attribution are not independent problems. They are the same problem expressed at different system layers. Every platform that treats them independently creates integration seams that degrade performance, introduce latency, and prevent the data feedback loops that produce compounding system improvement.

TA Quant is designed as a single integrated system with interconnected layers that share data infrastructure, authentication state, and execution context. The absence of integration seams is not a user experience decision - it is a technical requirement for the feedback loops that make the system improve continuously with use.

### 1.2 Five Core Architectural Principles

**Separation of Concerns at Layer Boundaries**

Each system layer has a precisely defined domain and is prevented from crossing its boundaries. The execution engine has no opinion on what to trade, only how to trade it. Alpha agents generate signals but cannot submit orders. Risk agents can veto all upstream decisions but cannot modify strategy logic. Attribution infrastructure consumes execution data but cannot influence execution decisions. These constraints are enforced programmatically at the component boundary level.

**Determinism at the Execution Layer**

The execution engine behaves identically under all market conditions - normal trading, high volatility, exchange degradation, and partial connectivity. No probabilistic logic is introduced at the execution layer.
Probabilistic decisions such as signal generation, venue scoring, and regime classification occur upstream. By the time any decision reaches the execution engine, it has been reduced to a deterministic instruction set. This prevents the class of failures where execution systems behave unexpectedly during market stress - precisely the conditions where reliability matters most.

**Ensemble Architecture at the Intelligence Layer**

No single model, strategy, or agent is relied upon for system performance. The AI layer is structured as a coordinated ensemble where multiple specialised agents operate in parallel over shared market state. Capital is dynamically allocated across strategy ensembles. Poorly performing components are de-weighted or disabled without disrupting the overall system. This mirrors institutional portfolio construction and ensures the platform survives the strategy degradation cycles that are inevitable in live markets.

**Verifiable Attribution Throughout**

Every attribution claim the platform makes - from execution metadata to campaign conversion - is programmatically verifiable and auditable. The Syndicate attribution chain is deterministic: a campaign exposure either did or did not produce an attributed trade, and this determination is made by the execution layer, not by self-reporting from KOLs or third-party tracking pixels. This level of rigour is only possible because the platform controls both the attribution source (the campaign) and the attribution anchor (the execution layer).

**Continuous Closed-Loop Learning**

Every layer generates feedback signals consumed by every other layer. Execution quality data updates AI execution heuristics. Strategy performance updates capital allocation weights. Campaign attribution data updates user acquisition models. The system improves as a function of operating - through structured, supervised adaptation bounded by deterministic safety constraints.
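As an illustration of the first principle above, the boundary between signal generation, risk review, and execution can be sketched as follows. This is a minimal sketch, not the platform's implementation: the engine is described as native Rust, Python is used here only for brevity, and every name (`Signal`, `Instruction`, `RiskAgent`, `ExecutionEngine`, `route`) and the single quantity limit are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Signal:
    """Proposal from an alpha agent; it has no way to submit an order."""
    instrument: str
    side: str           # "buy" or "sell"
    quantity: float
    confidence: float


@dataclass(frozen=True)
class Instruction:
    """Deterministic instruction handed to the execution engine."""
    instrument: str
    side: str
    quantity: float


class RiskAgent:
    """Can veto a signal but never edits strategy logic (hypothetical limit)."""

    def __init__(self, max_quantity: float) -> None:
        self.max_quantity = max_quantity

    def review(self, signal: Signal) -> Optional[Instruction]:
        if signal.quantity > self.max_quantity:
            return None  # veto: no instruction is produced
        return Instruction(signal.instrument, signal.side, signal.quantity)


class ExecutionEngine:
    """Accepts approved instructions only; has no opinion on what to trade."""

    def __init__(self) -> None:
        self.submitted: List[Instruction] = []

    def submit(self, instruction: Instruction) -> None:
        if not isinstance(instruction, Instruction):
            raise TypeError("execution layer accepts approved instructions only")
        self.submitted.append(instruction)


def route(signal: Signal, risk: RiskAgent, engine: ExecutionEngine) -> bool:
    """Pass a signal through risk review; submit only if it is approved."""
    instruction = risk.review(signal)
    if instruction is None:
        return False
    engine.submit(instruction)
    return True
```

In this sketch the only path to `ExecutionEngine.submit` runs through `RiskAgent.review`, so a vetoed signal never becomes an instruction. A Rust implementation could enforce the same boundary at compile time through module visibility rather than a runtime type check.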
### 1.3 Four-Layer Architecture Overview

| Layer | Component | Primary Function | Output |
|---|---|---|---|
| Execution | Terminal (Rust) | Multi-exchange order lifecycle management | Executed trades + execution metadata |
| Intelligence | TA Quant AI (Multi-Agent) | Regime detection, alpha generation, risk control, portfolio orchestration | Trading signals + risk decisions |
| Distribution | TA Syndicate | Verified KOL campaigns, fraud-resistant attribution, full-funnel tracking | Attributed volume + user acquisition |
| Automation | AISA (AI Strategy Agent) | Autonomous policy-constrained trade execution | Hands-off trading with full audit trail |

## Part II: Terminal - Execution Infrastructure

### 2.1 Execution Engine Design Philosophy

The Terminal execution engine is implemented in native Rust. This is not a performance optimisation applied to a system designed in another language - Rust is the foundational design decision from which all other execution layer properties follow. Memory safety without garbage collection, zero-cost abstractions, and ownership semantics deliver microsecond-level order book processing with the deterministic memory behaviour required for consistent low-latency execution under load.
**EXECUTION ENGINE PERFORMANCE - 90-DAY BETA**

- **Internal routing latency**: sub-10ms under all measured load conditions
- **Order throughput**: 900+ orders per second on standard cloud infrastructure
- **Average slippage (BTC/USDT perpetual, liquid conditions)**: 4.2 basis points
- **Limit order fill rate within spread**: 99.7% under normal market conditions
- **System uptime**: 99.94% - two planned maintenance windows, zero unplanned outages
- **Memory footprint**: approximately 140MB under full production load

### 2.2 Core Component Architecture

**API Gateway Layer**

Manages all external communications: user authentication with MFA enforcement, rate limiting, request routing, and TLS 1.3 termination. The gateway is stateless, enabling horizontal scaling without session affinity constraints.

**Exchange Connector Service**

Maintains persistent connections to 50+ exchanges through independent, sandboxed adapter modules. Each adapter is fully isolated - failure of one adapter cannot cascade to others. Each adapter handles exchange-specific authentication, WebSocket connection management with automatic reconnection and sequence number validation, real-time order book normalisation to the platform's canonical data model, and adaptive rate limit management with circuit breaker logic.

**Order Management System (OMS)**

The OMS is the central source of truth for order state across all connected exchanges. It maintains the full lifecycle state of every order from pre-submission validation through final reconciliation and provides the complete audit trail required by institutional clients.
- Idempotent order submission - duplicate submissions during network recovery are detected and deduplicated, preventing double-fills
- Sequence guarantees - causal ordering of order state transitions is enforced; an order cannot skip states
- Persistent state - OMS state is written to durable storage before any execution-side action, enabling consistent recovery from any failure
- Partial fill handling - partial fills are first-class events; position state updates atomically with each fill report

**Market Data Engine**

Aggregates and normalises market data across all connected exchanges into a unified, nanosecond-timestamped canonical representation. Maintains full-depth L2 order books in memory for all subscribed instruments, with outlier detection, gap filling, and automatic failover to secondary feeds on primary degradation. Historical data covers 500+ instruments spanning 5+ years.

**Smart Routing Engine**

Translates venue-agnostic trade instructions from the AI layer into exchange-specific submission decisions. Routing is computed fresh for every order and every slice of a worked order, evaluating four dimensions simultaneously: order book depth and spread, fee and rebate structure, exchange latency profile, and historical fill probability from an ML model trained on live execution data.

### 2.3 Order Lifecycle - Seven Stages

Every order passes through a seven-stage lifecycle without exception. No stage can be bypassed. All transitions are logged with nanosecond timestamps to the immutable audit trail.
| # | Stage | Detail |
|---|---|---|
| 1 | Pre-trade validation | Schema validation, account state, instrument tradability, exchange maintenance window check |
| 2 | Risk & exposure checks | Position sizing, drawdown check, correlation-aware exposure cap, kill-switch state |
| 3 | Venue selection | Smart routing engine scores available venues; decision logged with full scoring breakdown |
| 4 | Order type optimisation | Order type selected based on urgency, spread, depth, and maker/taker preference |
| 5 | Submission & acknowledgement | Order submitted; idempotency key attached; acknowledgement captured and validated |
| 6 | Fill handling | Each fill triggers atomic position update; remaining quantity re-routed if partial |
| 7 | Post-trade reconciliation | Execution metadata captured and published to the AI feedback pipeline for continuous learning |

### 2.4 Advanced Order Types

| Order Type | Implementation |
|---|---|
| TWAP | Divides total quantity into equal time-slices with randomised timing jitter (+/-15% default) to reduce predictability; auto-pauses when spread or depth conditions deteriorate beyond configurable thresholds |
| VWAP | Executes in proportion to historical and live volume patterns; pacing adjusts in real time as observed volume deviates from the historical profile |
| Iceberg | Displays only a configurable fraction of total size; the visible portion refreshes immediately on fill; refresh quantities are randomised to avoid detection patterns |
| Basket Orders | Executes multiple correlated instruments as a single coordinated operation; computes a submission schedule minimising aggregate market impact; conditional cancellation if any leg fails beyond threshold |
| Conditional / Multi-Trigger | Executes when one or more simultaneous conditions are met - price threshold, technical indicator value, cross-market condition, or time window; conditions evaluated at tick frequency |

### 2.5 Portfolio Management & Risk Analytics

Positions are maintained in-memory with O(1) lookup and atomic update semantics, providing a real-time, consistent view of portfolio state across all connected exchanges. Risk metrics are computed continuously:

| Metric | Description |
|---|---|
| Value at Risk (VaR) | Historical simulation at 95% and 99% confidence; recomputed on every position change |
| Portfolio Greeks | Delta, gamma, vega, theta for derivatives positions; aggregated across all active instruments |
| Correlation Matrix | Rolling 30/60/90-day correlation matrices; used by risk agents for exposure cap enforcement |
| Margin Utilisation | Per-exchange margin tracked in real time with configurable alert thresholds |
| Drawdown Monitoring | Peak-to-trough drawdown at strategy, portfolio, and account levels with automated intervention triggers |
| Concentration Risk | Position concentration by asset and strategy measured using Herfindahl-Hirschman Index |

### 2.6 FIX Protocol & API Access

The Terminal provides FIX 4.4 and FIX 5.0 connectivity for institutional clients requiring deterministic throughput and integration with existing order management systems. FIX sessions access the same underlying OMS and smart routing engine as REST and WebSocket users - FIX is a transport layer, not a separate execution path. This ensures consistent execution behaviour across all access methods.
| Access Method | Protocol | Use Cases |
|---|---|---|
| REST API | HTTPS / JSON | Account management, order submission, portfolio query, historical data |
| WebSocket Streams | WSS | Real-time market data, order status, position updates, alert delivery |
| FIX Protocol | FIX 4.4 / 5.0 | Institutional order entry, execution reports, market data subscription |
| Python SDK | REST + WebSocket wrapper | Systematic strategy integration, async execution, backtesting data |

## Part III: TA Quant AI - Multi-Agent Intelligence System

### 3.1 System Design Overview

TA Quant AI is not a single model, signal generator, or bot. It is a coordinated multi-agent system with specialised agents across five functional classes that map directly to the roles of an institutional quantitative trading desk.

The architecture enforces a critical invariant: information flows downstream through agent classes - market state feeds alpha, alpha feeds execution, execution feeds risk, risk feeds portfolio - but authority flows upstream. Risk agents can override alpha agents. Portfolio agents can override strategy allocation. Kill-switches override everything.

The orchestration layer manages agent scheduling, shared state access through typed interfaces, the authority hierarchy when conflicting outputs are produced, and a complete decision log for auditing and feedback learning.

### 3.2 Agent Taxonomy - Five Functional Classes

#### Class 1: Market State & Regime Agents

Market state agents classify the current trading environment and condition all downstream agents. No alpha signal is evaluated without regime conditioning - this is enforced architecturally.
| Agent | Function | Output |
|---|---|---|
| Volatility Regime Detector | Classifies current volatility state using realised vol, implied vol, and historical percentile | Regime label, confidence score, expected duration |
| Trend / Range Classifier | Determines directional vs. mean-reverting character via Hurst exponent, ADX, autocorrelation | Regime label, strength score, lookback-adjusted confidence |
| Liquidity Analyser | Evaluates order book depth, bid-ask spread, and market impact cost across active venues | Liquidity score per instrument per venue; spread regime label |
| Event & Anomaly Detector | Monitors for statistical anomalies on volume, spread, and price velocity; tracks scheduled events | Anomaly flag, severity score, recommended action |
| Regime Transition Estimator | Estimates transition probabilities between regimes using a Hidden Markov Model | Transition probability matrix, time-to-expected-transition estimate |

#### Class 2: Alpha Research Agents

Alpha agents generate candidate trading signals. Each agent produces a structured signal object: directional bias, expected return, risk estimate, confidence score, and recommended time horizon. Signals are proposals - they cannot directly cause order submission. Every signal passes through risk and execution agents before any trade instruction is generated.
| Strategy Family | Implementation | Regime Fit |
|---|---|---|
| Trend Following & Momentum | Multi-timeframe EMA crossovers, ADX-filtered breakouts, rate-of-change ranking | Active in trending regimes; de-weighted in range-bound conditions |
| Mean Reversion | Bollinger Band reversals, RSI divergences, Z-score statistical arbitrage across correlated pairs | Active in range regimes; disabled in high-volatility environments |
| Volatility Strategies | ATR-based breakout detection, Bollinger squeeze identification, volatility regime positioning | Active during regime transitions; effective around volatility events |
| Cross-Exchange Arbitrage | Real-time price discrepancy monitoring; funding rate carry; spot-perpetual basis trading | Regime-agnostic - driven by market microstructure |
| Sentiment & Alternative Data | Social sentiment aggregation, on-chain flow analysis, funding rate extremes | Signal modifier and overlay rather than primary signal source |
| ML-Enhanced Prediction | LSTM and transformer models for short-horizon direction; gradient-boosted feature models; RL agents for parameter adaptation | Regime label is a feature input; all predictions are regime-conditioned |

#### Class 3: Execution Intelligence Agents

Execution intelligence agents translate approved trade instructions into optimised execution parameters. They operate at tick frequency consuming live order book data, venue latency metrics, and historical fill performance. Their outputs are execution specifications that the Terminal routing engine implements.
- **Order Type Selection**: evaluates urgency, spread, depth, and maker/taker preference to select the optimal order type
- **Venue Selection**: scores available venues using the four-factor routing model and produces a quantity-allocated venue ranking
- **Maker/Taker Optimiser**: evaluates whether maker rebate justifies execution risk; updates based on current spread relative to strategy slippage tolerance
- **Slippage Estimator**: models expected market impact before submission; adjusts size, timing, and venue allocation to maintain slippage within strategy tolerances
- **Execution Feedback Agent**: consumes post-trade metadata and updates execution heuristics - the system's primary learning mechanism

#### Class 4: Risk & Exposure Control Agents

Risk agents are the system's immune system. They operate in parallel with all other classes and have unconditional veto authority - enforced architecturally, not by convention. A risk agent's block decision cannot be overridden by any other agent or strategy configuration.
| Control | Implementation |
|---|---|
| Position Sizing | Maximum position size per instrument enforced at every order; breaches block the order and generate an alert |
| Correlation-Aware Exposure | Portfolio exposure to correlated clusters capped independently of individual position limits; prevents inadvertent concentration during correlation shifts |
| Drawdown Management | Progressive intervention: position sizing reduced at 50% of max drawdown threshold, reduced further at 75%; strategy halted at 100% |
| Automated Kill-Switch | Triggers on: account drawdown exceeding limit, anomaly detector at critical severity, exchange adapter failure affecting active positions, or manual operator trigger |
| Cross-Strategy Exposure | Total portfolio exposure across all running strategies monitored against account-level limit; strategies throttled proportionally when approaching limit |
| Liquidation Risk Monitor | Real-time tracking of distance to liquidation price for leveraged positions; automatic de-leveraging trigger at configurable proximity threshold |

#### Class 5: Portfolio Orchestration Agents

Portfolio orchestration agents manage capital allocation across the full strategy ensemble. Their objective is to maximise risk-adjusted portfolio returns while maintaining return stability - not to maximise any individual strategy.
- **Strategy Weighting Agent**: allocates capital using recent Sharpe ratio, volatility contribution, and regime fit score; recalculated daily and after significant portfolio events
- **Capital Rebalancing Agent**: identifies allocation drift from differential strategy performance and initiates rebalancing orders; threshold-triggered to avoid excessive transaction costs
- **Regime-Conditioned Allocation**: adjusts strategy family weights based on regime classification - trending regimes increase momentum weight, range regimes increase mean-reversion weight; bounded so no strategy family can be zeroed out by regime conditioning alone
- **Ensemble Correlation Monitor**: tracks realised correlation between strategy returns; elevated correlation triggers review and potential portfolio exposure reduction

### 3.3 Learning & Feedback Loop Architecture

The AI system learns continuously from live execution outcomes. Learning operates on a streaming basis from the execution feedback pipeline - not as a batch process. All learning occurs within predefined parameter spaces and cannot modify core risk controls, increase agent authority, or make structural changes to the agent hierarchy.

| Feedback Signal | Consuming Agent | Update Mechanism |
|---|---|---|
| Post-trade slippage vs. estimate | Slippage Estimator | Updates venue-specific slippage model coefficients via gradient descent on prediction error |
| Fill rate per venue per order type | Venue Selection Agent | Updates fill probability estimates in the routing scoring function |
| Strategy P&L vs. forecast | Strategy Weighting Agent | Updates alpha confidence scores; outperforming strategies receive higher allocation weight |
| Regime prediction vs. realised | Regime Transition Estimator | Updates HMM transition probability matrix from observed regime sequences |
| Agent trust scores | Orchestration Layer | Systematically underperforming agents flagged for review; weight in ensemble decisions reduced |

### 3.4 Backtesting Engine

The backtesting engine runs against a historical data store covering 500+ instruments at minute-level OHLCV resolution spanning 5+ years, supplemented by tick-level trade data, order book snapshots, funding rates, on-chain metrics, and sentiment indices.

- **Vectorised Backtesting**: fast signal-to-return computation for strategy screening and parameter search
- **Event-Driven Backtesting**: order-by-order simulation running through the same strategy and risk agent logic that governs live trading - the required methodology for strategies with complex order management
- **Monte Carlo Simulation**: generates probability distributions over outcomes across diverse market scenarios for stress-testing and forward-looking performance estimation

Execution modelling is realistic: slippage calibrated from live execution data, simulated execution delay, square-root market impact for large orders, realistic partial fill and rejection rates, and funding and borrow costs for leveraged positions.

### 3.5 Strategy Marketplace

The strategy marketplace allows external quantitative developers to contribute strategies to the platform. Contributed strategies run in an isolated execution sandbox with no access to account credentials, no direct order submission capability, and no access to other users' position data.
Quality gates for marketplace listing:

- Minimum 3-year backtest with realistic execution modelling and out-of-sample walk-forward validation
- Sharpe ratio of at least 0.8 out-of-sample annualised; maximum drawdown not exceeding 25%
- Minimum 90-day paper trading period with performance within 20% of backtest expectation
- Code review by the platform quantitative team for look-ahead bias, survivorship bias, and implementation correctness
- 30-day limited live deployment at reduced capital before full marketplace availability

## Part IV: AISA - AI Strategy Agent

AISA (AI Strategy Agent) is a fully autonomous, capital-executing trading agent that operates under a user-defined policy framework. It is distinct from the TA Quant AI multi-agent research system - where the AI system generates recommendations, AISA acts on them directly, placing orders through the Terminal OMS under the constraints of the user's configured policy.

### 4.1 Distinction from the AI Research System

| Dimension | TA Quant AI | AISA |
|---|---|---|
| Primary function | Research, analysis, signal generation, portfolio optimisation recommendations | Autonomous execution - places orders directly under policy constraints |
| Capital control | Advisory - outputs are proposals for human or strategy review | Executing - submits live orders through the Terminal OMS |
| User interaction | Configure agents, review signals, set allocation parameters | Configure policy, monitor performance, review event log |
| Override mechanism | Human or risk agent | User-defined policy; automatic halt on policy breach |

### 4.2 Policy Framework

AISA operates under a user-defined trading policy that constrains all autonomous decisions. No AISA action can violate the configured policy - the policy layer has unconditional authority over the agent's execution.
Policy parameters include:

- Maximum position size per instrument, in absolute terms and as a percentage of account NAV
- Maximum total portfolio exposure at any point in time
- Instrument whitelist and blacklist - AISA cannot trade outside the permitted universe
- Maximum drawdown threshold - AISA self-halts when portfolio drawdown exceeds the configured limit
- Trading hours constraints - AISA can be restricted to specific time windows
- Minimum signal confidence threshold - only signals exceeding the configured confidence level trigger execution

### 4.3 Event System & Audit Trail

AISA maintains a time-ordered event log of all agent decisions, signal evaluations, policy checks, and trade executions. Every AISA action is traceable from its triggering signal through the policy check to the resulting order in the OMS. Events are available in real time through the AISA monitoring interface and retained in full for historical review and compliance reporting.

### 4.4 Trade Attribution

AISA-executed trades are tagged in the OMS with the agent identifier and the policy version under which the trade was executed. This attribution enables clean performance analysis that distinguishes AISA-executed trades from manually placed trades and from other strategy-driven trades - providing unambiguous performance attribution at the agent level.

## Part V: TA Syndicate - Attribution Infrastructure

### 5.1 Attribution Architecture

TA Syndicate's core technical contribution is a verifiable attribution chain that connects a marketing event to an executed trade on a partner exchange. This chain is deterministic - there is no probabilistic inference. An attributed trade either has a valid, unbroken attribution chain back to its originating campaign event, or it does not count.

This level of rigour is only achievable because TA Quant controls both ends of the attribution chain: the campaign origination through the Syndicate platform, and the execution anchor through the Terminal.
Platforms that rely on exchange-reported affiliate data without controlling the execution layer cannot achieve this - they are trusting third-party data that is aggregated, delayed, and unauditable.

### 5.2 Four-Layer Attribution Framework

**Layer 1: Campaign Event Capture**

Every campaign asset carries a unique, cryptographically signed attribution token encoding the campaign ID, KOL ID, asset version, and timestamp, with a tamper-evident signature. When a user interacts with a campaign asset, the token is captured and stored in the attribution event log.

**Layer 2: User Journey Tracking**

UTM parameter tracking, first-party session continuity, and referral codes maintain attribution as users move from campaign exposure through platform registration, account funding, and first trade. Attribution windows are configurable per campaign. Multi-touch attribution is supported with configurable weighting models including first-touch, last-touch, linear, time-decay, and data-driven approaches.

**Layer 3: Exchange Volume Attribution**

Terminal-connected accounts are programmatically tagged at registration with their originating attribution token. When a tagged account executes a trade through the Terminal, the trade is attributed to the originating campaign directly from the OMS - not from exchange affiliate reporting APIs. This provides deterministic, real-time attribution data with a complete audit trail.

**Layer 4: On-Chain Attribution**

For DeFi protocol campaigns, wallet-level tracking provides attribution anchored to the immutable on-chain ledger. Sybil detection filters wallets exhibiting suspicious patterns - identical deposit amounts, coordinated timing, or common withdrawal destinations - ensuring that on-chain attribution reflects genuine user activity.
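The signed attribution token described under Layer 1 can be sketched as follows. This is an illustrative sketch only: the whitepaper does not specify the signature scheme or token encoding, so HMAC-SHA256 over a canonical JSON payload is an assumption, and the function names `sign_token` and `verify_token` are hypothetical. A production system might use an asymmetric signature so that verifiers do not hold the signing key.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional


def sign_token(secret: bytes, campaign_id: str, kol_id: str,
               asset_version: str, timestamp: int) -> str:
    """Encode the four fields named in Layer 1 and append an HMAC signature."""
    payload = json.dumps(
        {"campaign_id": campaign_id, "kol_id": kol_id,
         "asset_version": asset_version, "timestamp": timestamp},
        sort_keys=True, separators=(",", ":"),   # canonical byte layout
    ).encode()
    signature = hmac.new(secret, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode() + "."
            + base64.urlsafe_b64encode(signature).decode())


def verify_token(secret: bytes, token: str) -> Optional[dict]:
    """Return the decoded claims if the signature is valid, otherwise None."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:                            # malformed token or base64
        return None
    expected = hmac.new(secret, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):  # constant-time compare
        return None                               # tampered or wrong key
    return json.loads(payload)
```

Any change to the campaign ID, KOL ID, asset version, or timestamp after signing changes the payload bytes, so `verify_token` rejects the token. The signature makes tampering detectable rather than impossible.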
### 5.3 KOL Network Infrastructure

**Verification Pipeline**

All KOLs pass through an automated verification pipeline before campaign participation:

- Audience authenticity analysis: follower growth pattern analysis, engagement rate validation against tier benchmarks, bot detection using proprietary engagement pattern models
- Trading account verification: KOLs must connect a live trading account through the Terminal to confirm they are active traders - a differentiating requirement not enforced by competing KOL platforms
- Content history review: quality assessment of historical content, previous campaign performance where available, compliance review against platform content standards
- Geographic verification: audience demographics verified against claimed geographic focus; significant mismatches result in tier downgrade or rejection

**Performance Scoring**

Every KOL has a continuously updated performance score computed from: attributed conversion rate from impressions to funded accounts, average trading volume per attributed user, audience retention at 30/60/90 days, and content compliance rate. Scores determine campaign eligibility, compensation rates, and tier classification.

### 5.4 Campaign Management

Campaign briefs are structured data objects, not free-form documents. The campaign engine validates each brief against a schema enforcing: objective definition, KOL selection constraints, budget and compensation structure, attribution window, and success metrics. All campaigns are evaluated against executed volume and funded account outcomes - not impressions or follower counts.

### 5.5 Competition Platform

The white-label trading competition infrastructure integrates with the Terminal for real-time position tracking and leaderboard computation. Anti-manipulation logic flags positions that offset each other across accounts or that round-trip within implausibly short windows.
Automated prize distribution and real-time leaderboards with configurable ranking metrics are included.

### 5.6 Narrative Intelligence Engine

An NLP pipeline monitors market narrative formation and provides strategic campaign timing and positioning guidance. Components include real-time sentiment monitoring across social platforms updated at 5-minute intervals, narrative lifecycle tracking from emergence through peak and decay, competitor intelligence on campaign activity and exchange marketing signals, and crisis detection for early warning of reputation threats.

## Part VI: Advanced Automation & Execution Systems

Beyond the core Terminal execution and AI intelligence layers, the platform provides four specialised automation subsystems addressing distinct professional trading use cases: exchange algorithmic strategy deployment, market making, high-frequency trading, and general algorithm bot management.

### 6.1 Exchange Algo System

The Exchange Algo System is a full strategy deployment and lifecycle management framework for algorithmic strategies that run continuously against live markets. It is distinct from the Terminal's single-order execution algorithms - it is a persistent, managed system that deploys strategies, allocates capital, monitors performance, and provides simulation capability before live deployment.

| Capability | Description |
|---|---|
| Strategy Definition | Define algorithmic strategies with configurable entry/exit conditions, instrument universe, timing parameters, and risk rules |
| Capital Allocation | Allocate capital budgets per strategy; track utilisation in real time; rebalance allocations without stopping running strategies |
| Simulator | Run strategies against live market data in paper mode before deploying capital; compare simulator vs. live performance to validate strategy behaviour |
| Deployment Management | Version-controlled strategy configurations; deployment history; rollback to prior configuration without manual re-entry |
| Live Monitoring | Real-time order flow, position state, fill rate, slippage, and latency metrics per deployed strategy |
| Post-Hoc Analytics | Performance attribution, execution quality analysis, and comparison across deployment versions |

All trade instructions generated by the Exchange Algo System are submitted through the same Terminal OMS path as all other order sources. The OMS applies identical pre-trade validation, risk checks, and smart routing regardless of order source - the Exchange Algo System cannot bypass any execution control.

### 6.2 Market Maker Subsystem

The market maker subsystem implements a configurable, inventory-aware quote-posting engine that maintains two-sided markets on specified instruments across connected exchanges. Market making is treated as a first-class strategy type with its own operational framework, not as a generic strategy configuration.

**Core Mechanisms**

- Quote generation: bid/ask quotes based on a configurable spread model, reference price source (mid-market, VWAP, last trade, or composite), and current inventory position
- Inventory management: maximum inventory limits enforced per instrument and per side; the engine actively skews quotes to attract offsetting flow as inventory approaches limits
- Rebate optimisation: preferentially places maker orders; monitors fill rates dynamically and adjusts quote aggressiveness to maintain fill rate targets while maximising rebate capture
- Reference price management: supports multiple reference price sources per instrument; switchable without restarting the bot

A dedicated simulator runs the market maker engine against historical or live data in paper mode, providing realistic pre-deployment validation including queue position simulation, partial fill modelling, and fee and rebate realisation.

### 6.3 HFT Strategy Framework

High-frequency trading strategies are managed as a distinct subsystem given their fundamentally different operational requirements: microsecond-sensitive latency targets, order-per-second throughput requirements that exceed the standard strategy framework, and risk controls calibrated to the compressed time horizon.
- Dedicated execution fast-path in the OMS for HFT orders; pre-trade validation is maintained but certain analytics steps are deferred to avoid adding latency
- Explicit latency targets per order type in strategy configuration; the system monitors achieved latency against targets and alerts when sustained latency exceeds threshold
- Per-strategy throughput limits that sum below the Rust engine's capacity envelope, preventing strategy competition for throughput
- Risk controls at a faster cycle time than standard risk agents - position limits and drawdown checks on every order, not at bar close
- Microsecond-granularity execution quality tracking for latency attribution and strategy optimisation

### 6.4 Algorithm Bots

The Algorithm Bots framework provides a general-purpose deployment environment for user-defined automated strategies that do not fit the exchange algo or HFT frameworks. The full bot lifecycle is managed by the platform: creation and configuration through a structured interface, paper mode and simulator testing before live deployment, managed process execution with health monitoring and automatic restart on failure, real-time monitoring dashboards, and complete history of configurations, deployments, and performance retained for audit and comparison.

## Part VII: Markets & Analytics Suite

The Markets & Analytics Suite is the platform's market intelligence infrastructure - the data environment in which all trading and strategy decisions are made. It provides professional-grade analytical depth across market data, derivatives intelligence, volume analysis, sentiment, and machine learning feature computation.
### 7.1 Core Market Data

- Real-time snapshot: price, volume, and market cap across the full instrument universe updated at tick frequency from exchange feeds
- Token details: full profile including exchange listings, on-chain metrics, trading pair analysis, and historical OHLC at configurable resolution
- Market screener: configurable screener with price, volume, momentum, volatility, and sentiment filters; results ranked and updated in real time
- News hub: aggregated market news with per-asset filtering, sentiment scoring, and configurable alerts for breaking news on monitored assets

### 7.2 Derivatives Analytics

Institutional-grade derivatives market intelligence covering the data that professional traders use to understand positioning and identify structural opportunities:

| Metric | Coverage |
|---|---|
| Funding Rates | Per-exchange perpetual swap funding rates; historical funding time series; anomaly detection for extreme funding environments; configurable alerts |
| Open Interest | Aggregate OI by asset and exchange; OI change as a momentum and positioning signal; OI concentration analysis |
| Liquidations | Real-time and historical liquidation data; liquidation cluster identification for support/resistance context; cascade risk assessment |
| Long/Short Ratios | Top-trader and aggregate long/short ratios from exchanges that publish this data; ratio extremes as contrarian indicators |
| Spot-Futures Basis | Basis tracking across instruments; carry opportunity identification; basis convergence monitoring |

### 7.3 Volume Analytics & Anomaly Detection

The volume analytics module goes beyond raw volume reporting to provide anomaly detection and wash-trading analysis - a differentiating capability relative to platforms that report exchange volume uncritically.
- Per-exchange volume breakdown: deviations from historical exchange volume share are flagged automatically
- Volume anomaly detection: statistical models identify spikes inconsistent with price action, order flow, or historical patterns
- Intraday volume profiling: compares intraday distribution against historical profiles to identify abnormal concentration
- Cross-exchange correlation: genuine market activity tends to produce correlated volume across venues; wash trading on a single exchange produces uncorrelated volume that stands out in cross-exchange analysis

### 7.4 Sentiment, Momentum & Alternative Indicators

| Dashboard | Data & Signals |
|---|---|
| Sentiment / Fear & Greed | Fear & Greed Index with historical time series; component decomposition (volatility, volume, momentum, social, dominance); threshold alerts |
| Momentum | Cross-asset momentum ranking; momentum score per instrument; momentum regime classification (accelerating, peak, decelerating, trough) |
| AltSeason Index | Percentage of top altcoins outperforming Bitcoin over trailing periods; historical altseason identification; rotation signals |
| Thermograph | Market heat map visualising price performance across the instrument universe on configurable timeframes |
| Social Intelligence | Twitter/X mention volume, Reddit engagement, Telegram activity; trending topics; unusual social activity detection per asset |
| AI Regime Indicators | Platform-generated regime signals from the AI intelligence layer displayed as contextual overlays in the markets interface |

### 7.5 ML Feature Store

The feature store is the quantitative analytics backbone connecting raw market data to machine learning model inputs. Features are computed at different frequencies to match their consumers: tick frequency for execution agents and real-time risk, scheduled updates for strategy allocation and regime detection.
The feature catalogue tracks all computed features with metadata - computation logic, update frequency, data dependencies, and consuming models. Distribution monitoring detects feature drift that may indicate data quality issues or market regime shifts requiring model recalibration.

## Part VIII: Paper Trading System

Paper trading is a fully featured subsystem that uses the same OMS, strategy framework, and risk controls as live trading. Orders are intercepted at the venue selection stage and fills are simulated from live order book state rather than submitted to an exchange. The OMS processes synthetic fills identically to live fills: positions are updated, P&L is computed, and all downstream analytics are populated.

Full order type support is available in paper mode - market, limit, TWAP, VWAP, Iceberg - with the same pre-trade validation and risk checks as live trading. Paper and live portfolios are maintained in parallel and both visible in the same portfolio dashboard. Paper portfolios can be reset to a clean state, with reset history logged for audit.

The paper trading engine also powers the Exchange Algo Simulator and Market Maker Simulator, providing realistic pre-deployment validation environments for those subsystems.

## Part IX: System Integration & Data Architecture

### 9.1 Unified Data Infrastructure

All platform components share a common data infrastructure layer - the technical foundation for the cross-layer feedback loops that drive system improvement. Every component can read data produced by every other component through typed interfaces, subject to access control. There is no data siloing.

| Data Store | Technology | Primary Use |
|---|---|---|
| Relational Database | PostgreSQL (HA cluster) | User accounts, strategy configurations, campaign data, audit logs |
| Time-Series Database | TimescaleDB | Market data, execution metadata, performance metrics, system telemetry |
| Cache Layer | Redis (cluster mode) | Session state, real-time position cache, real-time event pub/sub |
| Document Store | MongoDB | Strategy specifications, campaign briefs, KOL profiles, AI model configurations |
| Event Streaming | Apache Kafka | Cross-component event bus; guaranteed delivery; ordered event log with replay |
| Object Storage | AWS S3 | Historical data archives, model artefacts, audit log cold storage |

### 9.2 Cross-Layer Data Flows

The Terminal publishes a post-trade execution event for every completed order containing: instrument, venue, order type, quantity, fill price, reference price at submission, slippage in basis points, time-to-fill, and maker/taker classification. These events are consumed in real time by the AI execution agents for heuristic updating and by the performance attribution system for strategy evaluation.

The AI system publishes trade instructions as structured objects containing: strategy identifier, instrument, direction, quantity, urgency level, maximum slippage tolerance, time-in-force, and venue constraints. The Terminal does not execute strategy logic - it executes the instruction it receives.

When a Syndicate-attributed user executes a trade through the Terminal, the OMS attaches the attribution token to the trade record at write time - this attachment cannot be stripped or modified by downstream components.
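To make the post-trade execution event concrete, the sketch below models its fields as a Python dataclass and derives slippage in basis points from the fill price and the reference price at submission. The class name `ExecutionEvent`, the field names, and the explicit `side` field are assumptions for illustration (the field list above does not name an order side, but a signed slippage figure needs one); the sign convention, positive meaning a worse price than the reference, is likewise assumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionEvent:
    """Illustrative shape of a post-trade execution event (names are hypothetical)."""

    instrument: str
    venue: str
    order_type: str
    side: str  # "buy" or "sell"; assumed field, needed to sign the slippage
    quantity: float
    fill_price: float
    reference_price: float  # reference price at submission
    time_to_fill_ms: float
    is_maker: bool  # maker/taker classification

    def __post_init__(self) -> None:
        if self.side not in ("buy", "sell"):
            raise ValueError("side must be 'buy' or 'sell'")
        if self.reference_price <= 0:
            raise ValueError("reference_price must be positive")

    @property
    def slippage_bps(self) -> float:
        """Slippage in basis points; positive means worse than the reference."""
        signed = self.fill_price - self.reference_price
        if self.side == "sell":
            # A seller is hurt by a lower fill, so flip the sign.
            signed = -signed
        return signed / self.reference_price * 10_000


event = ExecutionEvent(
    instrument="BTC/USDT-PERP",
    venue="example-venue",
    order_type="limit",
    side="buy",
    quantity=0.5,
    fill_price=60_030.0,
    reference_price=60_000.0,
    time_to_fill_ms=42.0,
    is_maker=False,
)
print(round(event.slippage_bps, 2))
```

Freezing the dataclass mirrors the idea that a published event is an immutable record; consumers such as the execution agents would read it, not mutate it.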
### 9.3 Network Effects & Data Compounding

The platform generates four compounding data assets that increase in value with scale and operational time:

| Data Asset | How It Grows | How It Improves the System |
|---|---|---|
| Execution Metadata Library | Every trade adds a venue/timing/conditions/slippage data point | Routing ML models become more accurate; slippage estimates tighten; venue scoring improves |
| Regime History Database | Every observed regime transition extends the HMM training set | Regime detection accuracy improves; transition timing estimates sharpen |
| Attribution Conversion Data | Every campaign adds attributed user conversion and LTV observations | CAC models improve; audience-to-conversion predictions sharpen; budget allocation optimises |
| Strategy Performance Registry | Every live strategy generates out-of-sample performance data | Allocation models improve; ensemble construction becomes more sophisticated |

## Part X: Technology Stack

### 10.1 Execution Layer

| Component | Technology | Rationale |
|---|---|---|
| Execution Engine | Rust | Memory safety without GC; zero-cost abstractions; 900+ OPS throughput; deterministic performance |
| Order Book Processing | Rust with lock-free data structures | Microsecond processing requires lock-free concurrent access to shared order book state |
| Exchange Adapters | Rust async (Tokio runtime) | Async I/O for hundreds of concurrent WebSocket connections without thread-per-connection overhead |
| OMS State | PostgreSQL + Redis | Durable order state in Postgres; fast hot-state access in Redis; Redis pub/sub for event notification |
| FIX Engine | Rust (QuickFIX-RS) | Native Rust FIX implementation; consistent latency profile with rest of execution stack |

### 10.2 Intelligence Layer

| Component | Technology | Rationale |
|---|---|---|
| Agent Orchestration | LangGraph (Python) | DAG execution semantics; explicit state management; conditional branching; LangChain integration |
| Agent Implementation | LangChain (Python) | Standardised agent interface; tool use; memory management; multi-provider model access |
| ML Models | PyTorch + scikit-learn | LSTM/transformer for price prediction; gradient-boosted trees for routing and regime features |
| Strategy Execution | Python (FastAPI) | Strategy logic, signal pipeline, backtesting engine, performance attribution |
| Historical Data | TimescaleDB (SQL) | Time-series optimised queries; continuous aggregates for OHLCV; hypertable partitioning |
| Feature Store | Redis + PostgreSQL | Sub-millisecond real-time feature access in Redis; historical materialisation in Postgres |

### 10.3 Frontend

| Component | Technology | Role |
|---|---|---|
| Framework | React 18 + TypeScript | Component model; concurrent rendering for real-time data; full type safety |
| Build Tool | Vite | Fast development and production builds; native ESM |
| Server State | @tanstack/react-query | Server state fetching, caching, synchronisation, and optimistic updates |
| API Client | tRPC (TypeScript) | Type-safe API calls with automatic type inference from server schema; zero runtime type errors |
| Charting | TradingView Charting Library | Institutional-grade charting; custom indicators; WebSocket data binding |
| Workflow Builder | React Flow | Visual node-based editor for AI Hedge Fund flow construction and automation pipeline configuration |
| Routing | React Router v6 | Client-side routing; route-level code splitting; protected route enforcement |

### 10.4 Infrastructure & Deployment

| Component | Technology | Rationale |
|---|---|---|
| Orchestration | Kubernetes (EKS + GKE) | Multi-cloud; horizontal autoscaling; self-healing; declarative configuration |
| Cloud | AWS (primary) + GCP (secondary) | Redundancy; best-of-breed services per provider; no single-cloud dependency |
| CDN / DDoS | Cloudflare | Edge caching; DDoS mitigation; WAF; bot detection at network edge |
| Event Streaming | Apache Kafka (managed) | Guaranteed delivery; ordered event log; replay capability for recovery |
| Observability | Prometheus + Grafana | Real-time metrics; custom dashboards; SLO tracking; alerting |
| Distributed Tracing | Jaeger | End-to-end request tracing across services; latency attribution; error root cause |
| CI/CD | GitHub Actions + ArgoCD | Automated testing; GitOps deployment; progressive rollout |

## Part XI: Security & Reliability

### 11.1 Authentication & Access Control

- OAuth 2.0 with PKCE for all user-facing authentication flows; short-lived access tokens with rotating refresh tokens
- Multi-factor authentication enforced for all accounts - TOTP required; hardware key (FIDO2/WebAuthn) supported for institutional accounts
- Role-based access control with fine-grained permission scopes; institutional accounts support sub-account permission hierarchies
- IP allowlisting available for institutional and API-only accounts
- Device fingerprinting with trust management: unrecognised devices trigger step-up authentication; trusted devices maintain a configurable trust window
- Progressive account lockout on repeated authentication failures with automatic unlock notification
- Dedicated token revocation service: all tokens revoked immediately on password change; admin-initiated emergency revocation available

### 11.2 Data Security

- AES-256 encryption for all data at rest; 90-day key rotation schedule; keys managed through AWS KMS
- TLS 1.3 enforced for all network communications
- Exchange API credentials stored encrypted; decrypted only in-memory at execution time; never written to logs
- Database field-level encryption for PII, API keys, and financial identifiers
- No user funds held by the platform - custody risk is eliminated by design

### 11.3 API Security

- HMAC-SHA256 request signing; replay attack prevention via nonce and timestamp validation
- Rate limiting at the API gateway: per-user, per-endpoint, and per-IP limits with adaptive throttling
- WAF rules at the Cloudflare edge: SQL injection, XSS, and path traversal protection
- API key scoping: read-only, trade-only, and withdrawal-restricted key types; institutional sub-keys with further restricted scopes

### 11.4 Security Audit Log

An immutable, append-only security audit log records all security-relevant events: authentication attempts, account changes, API key lifecycle events, admin actions, and device registration events. The audit log is tamper-evident, retained for regulatory reporting, and archived to cold storage after the hot retention window.

### 11.5 Reliability Architecture

The system is designed for a 99.9% uptime target measured against the execution-critical path. The 90-day beta achieved 99.94%.

- Component isolation: exchange adapters run in isolated processes; a crash in one adapter cannot affect others or the core OMS
- Circuit breakers: every external dependency has a circuit breaker that opens on sustained failure; open circuits route to fallback behaviour rather than retrying indefinitely
- Graceful degradation: the execution engine degrades gracefully under partial connectivity - if smart routing cannot score all venues, it routes to available venues using a simplified scoring function rather than failing the order
- Automatic reconnection: all exchange connections reconnect automatically with exponential backoff and jitter; sequence numbers are validated on reconnect to detect and fill any data stream gaps

### 11.6 Incident Management

Incidents are classified by severity with predefined escalation paths and response procedures.
All significant incidents require a post-incident review whose findings feed directly into system design updates, monitoring threshold adjustments, and operational documentation - incidents are treated as data, not noise.

### 11.7 Compliance

| Area | Posture |
|---|---|
| SOC 2 Type II | Controls designed to SOC 2 standard from inception; certification audit planned for end of Year 1 |
| KYC / AML | KYC at account registration; AML monitoring on transaction patterns; FATF travel rule compliance |
| GDPR | Data residency controls; right-to-erasure; processing agreements with all sub-processors; DPO designated |
| VARA (UAE) | Operating entity maintains VARA compliance posture as a technology platform provider |
| Audit Trail | Complete event log retained for all system operations; configurable retention with tiered hot/warm/cold storage |

## Part XII: Performance Benchmarks & Validation

### 12.1 Execution System - 90-Day Beta Results

**MEASUREMENT CONDITIONS**

- Duration: 90 days of continuous live system operation
- Instruments: BTC/USDT and ETH/USDT perpetuals (primary); 12 additional instruments (secondary)
- Exchanges: Binance, OKX, Bybit active throughout; 6 additional exchanges partial periods
- Environment: production system with live trading accounts and real capital

| Metric | Measured | Target | Notes |
|---|---|---|---|
| Internal routing latency (p50) | 6.2ms | <10ms | Order receipt to venue submission |
| Internal routing latency (p95) | 9.1ms | <10ms | Met at p95; occasional spikes at p99 in market stress |
| Order acknowledgement (p50) | 34ms | <50ms | Includes exchange processing; venue-dependent |
| Order acknowledgement (p95) | 87ms | <100ms | Bybit consistently lowest at ~28ms median |
| Limit order fill rate | 99.7% | >=99% | Normal conditions; degrades to ~97% in extreme volatility |
| Slippage - BTC/USDT perp | 4.2bp | <8bp | Measured against mid-price at signal time |
| Slippage - ETH/USDT perp | 5.8bp | <10bp | Wider spread instrument; within target |
| Rust engine throughput | 917 OPS | >=900 OPS | Under sustained load; no degradation over 90 days |
| System uptime | 99.94% | >=99.9% | Two planned maintenance windows; zero unplanned outages |

### 12.2 AI System Validation - Beta Period

All figures are from live paper trading and limited live trading during the 90-day beta. Out-of-sample results use a walk-forward methodology. Past performance is not indicative of future results.

| Strategy | Sharpe (OOS) | Max Drawdown | Win Rate | Calmar | Backtest Alignment |
|---|---|---|---|---|---|
| Trend Following - BTC perpetual | 1.42 | 8.3% | 54% | 2.1x | Within +/-15% |
| Mean Reversion - ETH/BTC pair | 1.18 | 6.1% | 61% | 2.4x | Within +/-18% |
| Volatility Expansion | 0.94 | 12.4% | 48% | 0.9x | Within +/-22% |
| Cross-Exchange Arbitrage | 2.31 | 2.8% | 73% | 4.2x | Within +/-8% |
| Portfolio Ensemble (blended) | 1.67 | 7.2% | 58% | 2.8x | Within +/-12% |

**AI Validation Metric**

- **Regime detection accuracy**: 78% correct classification vs. ex-post realised regime labels
- **Execution agent slippage improvement**: 38% reduction in slippage vs. naive market order baseline on identical signals
- **Risk agent intervention accuracy**: All automated de-risk triggers during beta correctly identified elevated risk conditions in retrospective review
- **Learning convergence**: Slippage reduced by 1.8bp from Day 1 to Day 90 - measurable AI learning from live execution feedback
- **Ensemble vs. single strategy**: Ensemble Sharpe (1.67) exceeds individual strategy Sharpe adjusted for correlation - diversification benefit confirmed

### 12.3 Independent Technical Review

The TA Quant execution engine and AI system architecture has been reviewed by independent technical advisors with institutional trading systems backgrounds.
Key findings:

- Rust execution engine architecture correctly designed for deterministic, low-latency performance - consistent with institutional-grade execution systems
- Agent separation-of-concerns implementation is sound - the boundary enforcement between alpha, execution, and risk agents is architectural, not conventional
- Risk agent authority hierarchy correctly implements the veto pattern; no execution path exists that bypasses risk agent evaluation
- Data pipeline from execution layer to AI feedback loop correctly implemented - no look-ahead bias identified in the learning system
- Idempotency key implementation in the OMS correctly prevents double-submission in all tested failure scenarios

## Part XIII: Development Roadmap

**Phase 1 - Foundation (Current)**

Core system deployed and validated in beta. Full multi-exchange Terminal connectivity with all advanced order types and FIX engine. All five AI agent classes deployed with full feedback loop architecture live. TA Syndicate attribution infrastructure operational. AISA autonomous agent live. Exchange Algo System, Market Maker, HFT framework, and Algorithm Bots operational. Multi-region cloud deployment with full observability stack.

**Phase 2 - Scale**

Mobile applications for iOS and Android. Enhanced portfolio analytics and prime brokerage-adjacent features. Strategy marketplace public launch with external developer SDK. Self-serve Syndicate campaign platform. Enhanced narrative intelligence and expanded on-chain attribution for DeFi protocols. Geographic expansion. SOC 2 Type II audit.

**Phase 3 - Enterprise**

White-label Terminal deployment for exchange partners. DeFi and on-chain execution integration with DEX aggregation and cross-chain routing. Prime brokerage services including credit, margin, and custodian integrations. AI trading assistant with conversational strategy configuration interface. Advanced ML capabilities at the portfolio level. Decentralised attribution using on-chain proofs.

**Phase 4 - Platform**

Open developer API ecosystem enabling third-party strategy, signal, and analytics providers to integrate through published APIs. Global infrastructure with low-latency execution nodes in all major trading regions. Developer marketplace for approved integrations. TA Quant's long-term vision is to become the comprehensive infrastructure platform where every serious trader, fund, and project operates by default.

| Technical Priority | Phase 1 | Phase 2 | Phase 3 | Phase 4 |
|---|---|---|---|---|
| Execution latency | Sub-10ms | Sub-5ms target | Co-location partnerships | Sub-1ms co-located |
| Exchange coverage | 50+ CEX | CEX + DEX integration | CEX + DEX + OTC | Full market structure |
| AI model refresh | Daily cycle | Hourly updates | Real-time tick-level | Continuous adaptation |
| Attribution | CEX volume | CEX + DeFi | Multi-chain | Decentralised proof |
| Uptime target | 99.9% | 99.95% | 99.99% | 99.99%+ |

## Conclusion

The TA Quant architecture reflects a specific thesis about what it takes to build durable infrastructure for professional crypto markets: that execution quality, trading intelligence, growth attribution, and autonomous execution are not independent problems, and that the integration between them is not a convenience but a technical requirement for the feedback loops that make the system improve continuously.

Every major architectural decision in this document follows from that thesis. Rust at the execution layer because deterministic performance is non-negotiable under market stress. Multi-agent AI with enforced separation of concerns because single-model trading systems fail in predictable ways that ensemble architectures avoid. Full-funnel attribution anchored to the execution layer because attribution without execution control produces self-reported data.
AISA as a policy-constrained autonomous agent because professional traders increasingly require infrastructure that removes the operational burden of trade execution without removing their control.

The 90-day beta has validated the core architecture: sub-10ms internal routing latency at p95, 99.94% uptime, measurable AI learning convergence, and attribution chains fully auditable to the individual trade. These are measured outcomes from a production system operating on live capital - not design targets.

The platform compounds. Each revolution of the execution-intelligence-attribution flywheel produces data that improves the next. Each new exchange partnership, attributed user, and strategy deployment makes the system measurably better. The roadmap ahead is an expansion of the same architectural principles into deeper product surfaces and broader markets.

**TA QUANT - TECHNICAL WHITEPAPER**

**March 2026 - taquant.com**

© 2026 TA Quant. All rights reserved.
